|

import os

import torch.autograd

import torch.nn as nn

from torchvision import datasets

from torchvision import transforms

from torchvision.utils import save_image

if not os.path.exists('./img'):

os.mkdir('./img')

def to_img(x):

out = 0.5 * (x + 1)

out = out.clamp(0, 1)

out = out.view(-1, 1, 28, 28)

return out

batch_size = 128

num_epoch = 100

z_dimension = 100

img_transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.5,), (0.5,))

])

mnist = datasets.MNIST(

root='./data/', train=True, transform=img_transform, download=True

)

dataloader = torch.utils.data.DataLoader(

dataset=mnist, batch_size=batch_size, shuffle=True

)

class discriminator(nn.Module):

def __init__(self):

super(discriminator, self).__init__()

self.dis = nn.Sequential(

nn.Linear(784, 256),

nn.LeakyReLU(0.2),

nn.Linear(256, 256),

nn.LeakyReLU(0.2),

nn.Linear(256, 1),

nn.Sigmoid()

)

def forward(self, x):

x = self.dis(x)

return x

class generator(nn.Module):

def __init__(self):

super(generator, self).__init__()

self.gen = nn.Sequential(

nn.Linear(100, 256),

nn.ReLU(True),

nn.Linear(256, 256),

nn.ReLU(True),

nn.Linear(256, 784),

nn.Tanh()

)

def forward(self, x):

x = self.gen(x)

return x

D = discriminator()

G = generator()

if torch.cuda.is_available():

D = D.cuda()

G = G.cuda()

criterion = nn.BCELoss()

d_optimizer = torch.optim.Adam(D.parameters(), lr=0.0003)

g_optimizer = torch.optim.Adam(G.parameters(), lr=0.0003)

for epoch in range(num_epoch):

for i, (img, _) in enumerate(dataloader):

num_img = img.size(0)

img = img.view(num_img, -1)

real_img = img

real_label = torch.ones(num_img)

fake_label = torch.zeros(num_img)

real_out = D(real_img)

d_loss_real = criterion(real_out, real_label)

real_scores = real_out

z = torch.randn(num_img, z_dimension)

fake_img = G(z).detach()

fake_out = D(fake_img)

d_loss_fake = criterion(fake_out, fake_label)

fake_scores = fake_out

d_loss = d_loss_real + d_loss_fake

d_optimizer.zero_grad()

d_loss.backward()

d_optimizer.step()

z = torch.randn(num_img, z_dimension)

fake_img = G(z)

output = D(fake_img)

g_loss = criterion(output, real_label)

g_optimizer.zero_grad()

g_loss.backward()

g_optimizer.step()

if (i + 1) % 100 == 0:

print('Epoch[{}/{}],d_loss:{:.6f},g_loss:{:.6f} '

'D real: {:.6f},D fake: {:.6f}'.format(

epoch, num_epoch, d_loss.data.item(), g_loss.data.item(),

real_scores.data.mean(), fake_scores.data.mean()

))

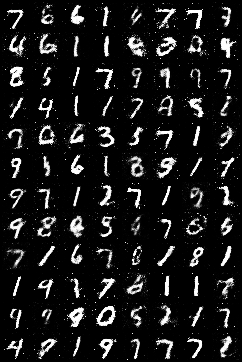

if epoch == 0:

real_images = to_img(real_img.cpu().data)

save_image(real_images, './img/real_images.png')

fake_images = to_img(fake_img.cpu().data)

save_image(fake_images, './img/fake_images-{}.png'.format(epoch + 1))

torch.save(G.state_dict(), './generator.pth')

torch.save(D.state_dict(), './discriminator.pth')

|
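For reference, the two kinds of `nn.BCELoss` call in the loop amount to the usual GAN objectives over a batch of $N$ images, where $D$ is the discriminator, $G$ the generator, $x_i$ a real image and $z_i \sim \mathcal{N}(0, I)$ the noise drawn by `torch.randn`. `d_loss` is the sum of two batch means, and because `g_loss` uses `real_label` as its target, the generator minimizes the non-saturating loss $-\log D(G(z))$ rather than $\log(1 - D(G(z)))$:

$$
\mathcal{L}_D = -\frac{1}{N}\sum_{i=1}^{N}\log D(x_i) \;-\; \frac{1}{N}\sum_{i=1}^{N}\log\bigl(1 - D(G(z_i))\bigr)
$$

$$
\mathcal{L}_G = -\frac{1}{N}\sum_{i=1}^{N}\log D(G(z_i))
$$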
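The printed `d_loss` and `g_loss` are easier to read against a reference point. The sketch below reimplements, in plain Python rather than PyTorch, the mean-reduced binary cross-entropy that `nn.BCELoss` computes by default, and evaluates it for a discriminator that outputs 0.5 for every image, as it would if it could no longer tell real from fake. The helper name `bce` and the constant outputs are illustrative assumptions, not part of the training script.

```python
import math


def bce(probs, targets):
    """Mean binary cross-entropy, mirroring nn.BCELoss with reduction='mean'."""
    total = 0.0
    for p, y in zip(probs, targets):
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(probs)


n = 128                # hypothetical batch size, same value as batch_size in the script
real_out = [0.5] * n   # assumed D outputs on real images
fake_out = [0.5] * n   # assumed D outputs on generated images

# Same combination of terms as d_loss and g_loss in the training loop
d_loss = bce(real_out, [1.0] * n) + bce(fake_out, [0.0] * n)
g_loss = bce(fake_out, [1.0] * n)

print('d_loss: {:.6f}, g_loss: {:.6f}'.format(d_loss, g_loss))
# → d_loss: 1.386294, g_loss: 0.693147
```

So at this balance point `d_loss` is $2\ln 2$ and `g_loss` is $\ln 2$. Training rarely sits there exactly, but a `d_loss` heading toward 0 while `D fake` approaches 0 usually means the discriminator is overpowering the generator.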